
You can also run Spark SQL statements using variables in the query. The following screenshot shows the output.

settings, appear after you have selected Bar Chart, allows you to choose Keys, and Values. Select the Bar Chart icon to change the display. The %sql statement at the beginning tells the notebook to use the Livy Scala interpreter. Select buildingID, (targettemp - actualtemp) as temp_diff, date from hvac where date = "6/1/13" Also the difference between the target and actual temperatures for each building on a given date. Paste the following query in a new paragraph. You can now run Spark SQL statements on the hvac table.

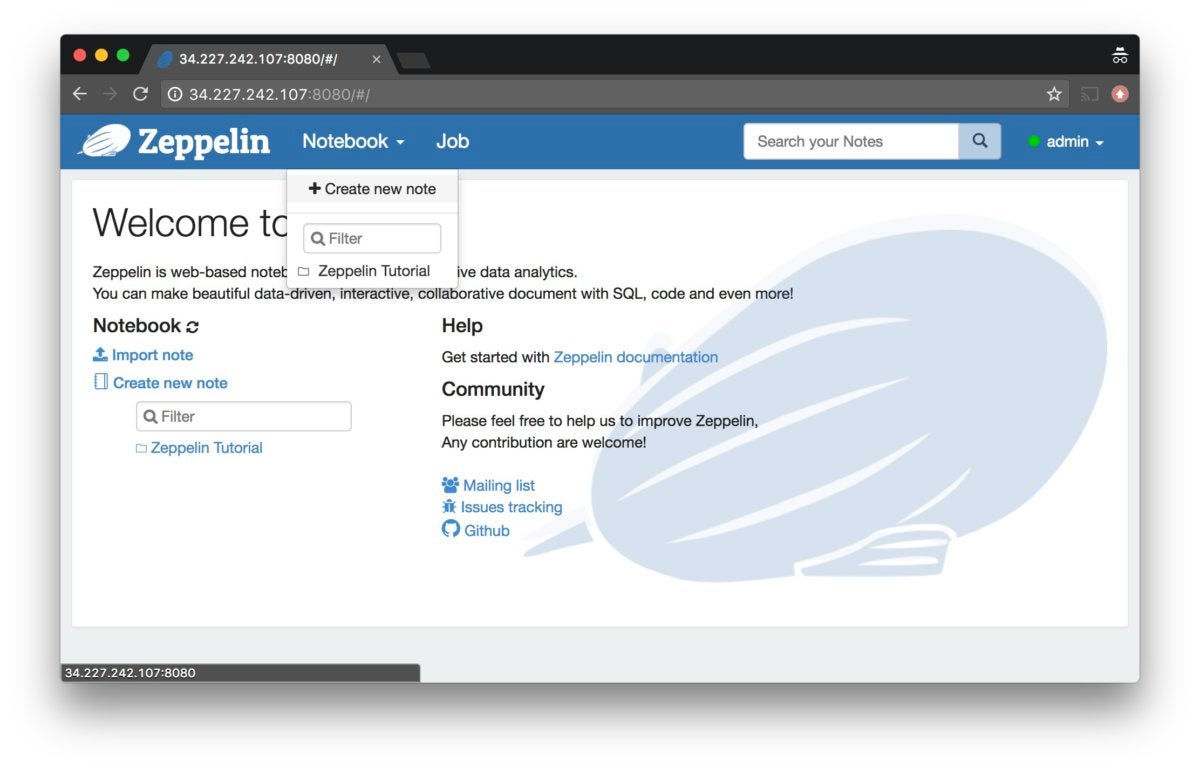

%spark2 interpreter is not supported in Zeppelin notebooks across all HDInsight versions, and %sh interpreter will not be supported from HDInsight 4.0 onwards. From the right-hand corner of the paragraph, select the Settings icon (sprocket), and then select Show title. You can also provide a title to each paragraph. The screenshot looks like the following image: The output shows up at the bottom of the same paragraph. The status on the right-corner of the paragraph should progress from READY, PENDING, RUNNING to FINISHED. Press SHIFT + ENTER or select the Play button for the paragraph to run the snippet. Register as a temporary table called "hvac" Val hvacText = sc.textFile("wasbs:///HdiSamples/HdiSamples/SensorSampleData/hvac/HVAC.csv")Ĭase class Hvac(date: String, time: String, targettemp: Integer, actualtemp: Integer, buildingID: String) Create an RDD using the default Spark context, sc The above magic instructs Zeppelin to use the Livy Scala interpreter In the empty paragraph that is created by default in the new notebook, paste the following snippet. When you create a Spark cluster in HDInsight, the sample data file, hvac.csv, is copied to the associated storage account under \HdiSamples\SensorSampleData\hvac. It's denoted by a green dot in the top-right corner. From the header pane, navigate to Notebook > Create new note.Įnter a name for the notebook, then select Create Note.Įnsure the notebook header shows a connected status. Replace CLUSTERNAME with the name of your cluster:Ĭreate a new notebook. Visualizations are not limited to SparkSQL query, any output from any language backend can be recognized and visualized.You may also reach the Zeppelin Notebook for your cluster by opening the following URL in your browser. Some basic charts are already included in Apache Zeppelin.
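To check what the hvac loading step and the temp_diff query compute without a cluster, here is a minimal plain-Scala sketch that mirrors the same logic on an in-memory list. It is not the notebook code. The sample rows are made up, the five-column layout is a simplification that matches the `Hvac` fields (the real HVAC.csv may contain more columns), and `HvacSketch` and `tempDiffOn` are hypothetical names. It also uses `Int` where the notebook snippet uses `Integer`.

```scala
// Plain-Scala sketch: no Spark or Zeppelin required. Sample rows are made up.
case class Hvac(date: String, time: String, targettemp: Int, actualtemp: Int, buildingID: String)

object HvacSketch {
  // Hypothetical rows in an assumed five-column layout, header line first.
  val lines: Seq[String] = Seq(
    "Date,Time,TargetTemp,ActualTemp,BuildingID",
    "6/1/13,0:00:01,66,58,4",
    "6/1/13,1:00:01,69,68,17",
    "6/2/13,0:00:01,70,73,4"
  )

  // Split each line on commas, drop the header row, and map fields to the schema.
  val hvac: Seq[Hvac] = lines
    .map(_.split(","))
    .filter(cols => cols(0) != "Date")
    .map(c => Hvac(c(0), c(1), c(2).toInt, c(3).toInt, c(4)))

  // Collection equivalent of:
  //   select buildingID, (targettemp - actualtemp) as temp_diff, date
  //   from hvac where date = "<date>"
  def tempDiffOn(date: String): Seq[(String, Int, String)] =
    hvac
      .filter(_.date == date)
      .map(h => (h.buildingID, h.targettemp - h.actualtemp, h.date))

  def main(args: Array[String]): Unit =
    tempDiffOn("6/1/13").foreach(println)
}
```

In the notebook itself the same filter and projection are expressed once in SQL against the registered hvac table, which is what lets Zeppelin render the result as a table or chart.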
